Are Large Language Models Really Encoding Human Wisdom?

With less than 10% of human knowledge digitized and available online, can we really claim that Large Language Models encode humanity's wisdom?

The Knowledge Paradox: Are Large Language Models Really Encoding Human Wisdom?

As artificial intelligence continues to evolve at a breathtaking pace, there’s a growing belief that Large Language Models (LLMs) have somehow become vast repositories of human knowledge. But is this really true? As someone deeply immersed in the field of generative AI, I’ve been grappling with a fascinating paradox that challenges this assumption.

The Iceberg of Human Knowledge

Think of human knowledge as an immense iceberg. What we see on the internet – the training ground for LLMs – is merely the tip breaking through the surface. Rough estimates suggest that less than 10% of all human knowledge is currently digitized and available online. This digital fragment offers only a small window into humanity’s collective wisdom.

To understand this gap, we need to look at different types of knowledge and their digital representation. Of traditionally documented knowledge – books, papers, and written records – only about 30-40% has been digitized. For instance, Google Books, one of the largest digitization projects in history, has scanned approximately 40 million books out of an estimated 130 million unique books ever published. That is roughly 31%, at the low end of that range.

The disparity becomes even more striking when we consider tacit knowledge – the kind of expertise that resides in human experience and practice. Only 5-10% of this knowledge, which includes professional expertise, craft techniques, and cultural practices, has been captured in digital form. For embedded knowledge – the wisdom contained within organizational processes, community practices, and indigenous traditions – the digital footprint is even smaller, estimated at less than 5%.

Moreover, the knowledge available online shows significant biases. It tends to be:

- Skewed toward recent decades

- Dominated by Western perspectives and English-language content

- Focused on academic and scientific domains

- More representative of urban rather than rural knowledge

- Concentrated in technologically advanced societies

Beneath these visible digital waters lies a vast expanse of wisdom: generations of oral traditions, the intuitive expertise of master craftspeople, proprietary research locked away in corporate vaults, and countless personal insights that have never been digitized.

This reality presents a crucial question: How can we claim that LLMs encode human civilization’s knowledge when they’ve only been exposed to such a small, skewed fraction of it?

Beyond Simple Storage: Understanding LLM Capabilities

However, this observation leads us to a more nuanced understanding of what LLMs actually do. Unlike traditional search engines that simply retrieve information, LLMs operate more like pattern recognition systems with a remarkable ability to synthesize and connect ideas. They don’t just store knowledge; they learn to recognize relationships and generate new insights from the patterns they’ve observed.

This distinction is crucial. While an LLM might not have access to all human knowledge, it can sometimes arrive at conclusions or make connections that weren’t explicitly present in its training data – much like how human experts can apply their understanding to novel situations.

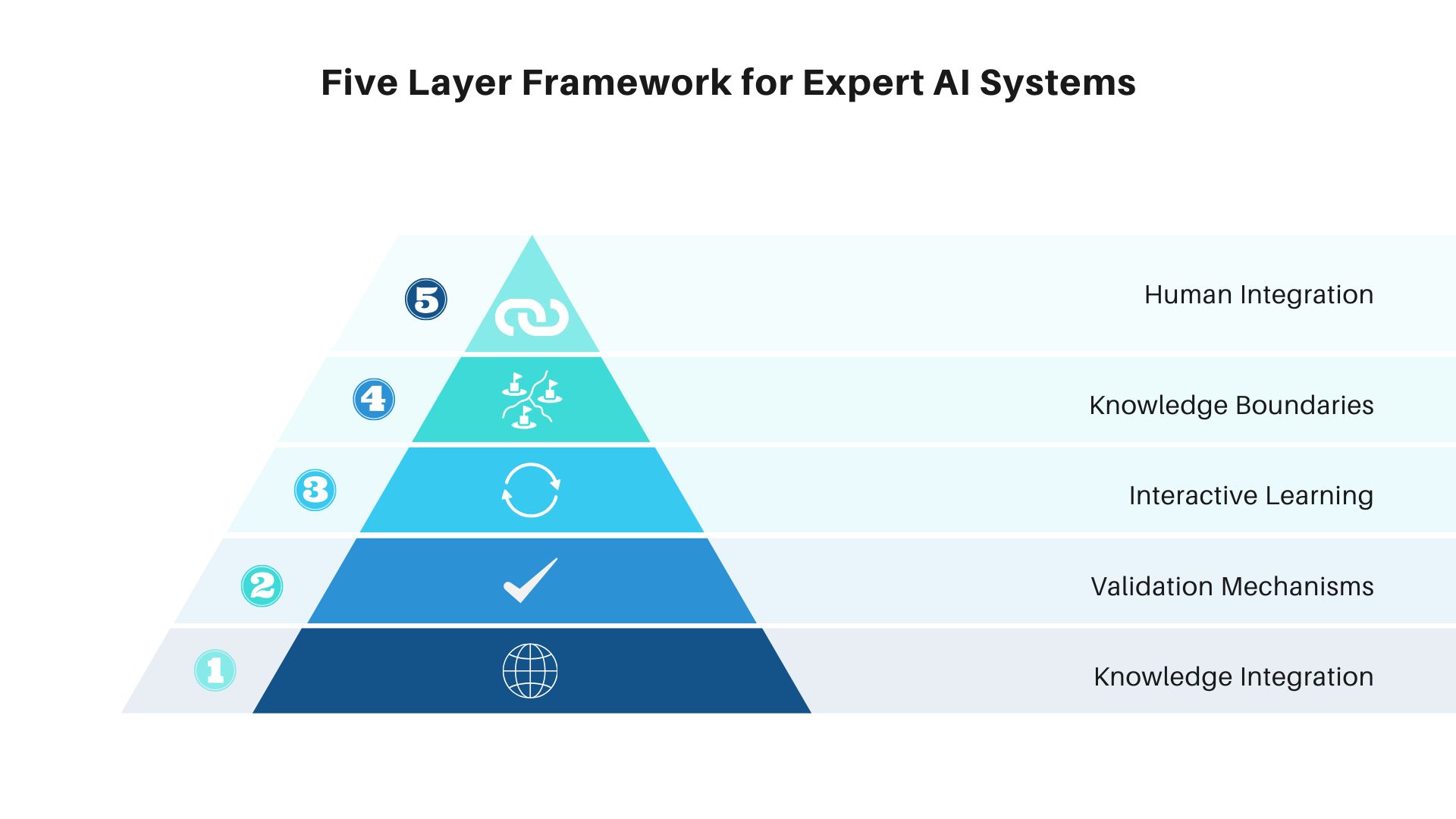

The Path Forward: A Framework for Expert AI Systems

This realization points us toward a more sophisticated approach to developing AI systems that can truly capture and utilize specialized knowledge. Instead of pursuing the myth of a single, all-knowing system, we should focus on creating networks of specialized expert systems that can work in concert with human expertise.

Let me outline a comprehensive five-layer framework for developing truly expert AI systems:

Image created with Canva

Layer 1: Knowledge Integration

The foundation begins with systematic knowledge integration. This layer involves not just feeding the system with technical documentation, research papers, and textbooks, but also developing innovative methods to capture tacit knowledge – the unwritten wisdom that experts accumulate through years of experience. We need new approaches to encode expert decision-making processes, practical heuristics, and the subtle nuances of professional judgment that often go undocumented.

Layer 2: Validation Mechanisms

The second layer establishes robust validation systems. This goes beyond simple accuracy checks to include sophisticated testing frameworks that simulate real-world scenarios experts encounter. Domain experts would need to design these tests, evaluating not just factual correctness but also the presence of nuanced understanding that characterizes true expertise. Think of this layer as creating a comprehensive “clinical trials” system for expert knowledge.
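To make the idea concrete, here is a minimal sketch of what such a validation harness could look like. Everything in it is an illustrative assumption rather than an established tool: the `Scenario` class, the `evaluate` function, and the toy model are hypothetical names, and the checks stand in for criteria that domain experts would actually write.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Scenario:
    """A hypothetical expert-designed test case."""
    prompt: str
    # Predicates written by domain experts; each inspects the model's output.
    checks: list[Callable[[str], bool]]


def evaluate(model: Callable[[str], str], scenarios: list[Scenario]) -> float:
    """Return the fraction of scenarios in which every expert check passes."""
    if not scenarios:
        return 0.0
    passed = 0
    for scenario in scenarios:
        output = model(scenario.prompt)
        if all(check(output) for check in scenario.checks):
            passed += 1
    return passed / len(scenarios)


def toy_model(prompt: str) -> str:
    # Stand-in for a real expert LLM; it always returns the same text.
    return "Adjust the plan and refer the case to a specialist."


scenarios = [
    Scenario("Complex case", [lambda out: "adjust" in out.lower(),
                              lambda out: "refer" in out.lower()]),
    Scenario("Routine case", [lambda out: "no action" in out.lower()]),
]

score = evaluate(toy_model, scenarios)
print(score)  # 0.5: the first scenario passes, the second does not
```

A scenario only counts as passed when all of its checks pass, which mirrors the point above: a factually correct answer that misses the nuance an expert would expect still fails.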

Layer 3: Interactive Learning

The third layer implements dynamic learning capabilities. Just as human experts refine their knowledge through practice, expert LLMs need environments where they can “practice” their expertise under controlled conditions. This layer would include simulation environments where systems can apply their knowledge to complex, realistic scenarios and receive meaningful feedback for improvement. It’s about creating a continuous learning loop that mimics how human experts develop their skills over time.

Layer 4: Knowledge Boundaries

The fourth layer focuses on establishing clear knowledge boundaries. A hallmark of true expertise is understanding the limits of one’s knowledge. This layer develops mechanisms for expert LLMs to recognize and clearly communicate when a problem exceeds their expertise. It includes sophisticated self-assessment capabilities and protocols for determining when to defer to human experts or other specialized systems. This awareness of limitations is as crucial as the knowledge itself.
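One simple way to picture this layer is a routing rule that decides whether to answer or defer. The sketch below is only an assumption about how it might be structured: the `Answer` type, the `route` function, the self-assessed `confidence` score, and the 0.8 threshold are all hypothetical, and producing a trustworthy confidence score is itself an open problem.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    text: str
    domain: str        # domain the question was classified into (assumed given)
    confidence: float  # hypothetical self-assessed score in [0, 1]


def route(answer: Answer, supported_domains: set[str], threshold: float = 0.8) -> str:
    """Decide whether to answer directly or defer, per the boundaries idea above."""
    if answer.domain not in supported_domains:
        # Outside this system's specialty: hand off to another specialized system.
        return "defer_to_other_system"
    if answer.confidence < threshold:
        # Within the specialty but uncertain: escalate to a human expert.
        return "defer_to_human"
    return "answer"


decision = route(Answer("Draft reply", "tax_law", 0.55), {"tax_law"})
print(decision)  # defer_to_human
```

The domain check runs before the confidence check on purpose: a system that is confident about a question outside its specialty should still hand it off.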

Layer 5: Integration with Human Expertise

The final layer creates seamless pathways for human-AI collaboration. This involves developing specialized interfaces and protocols that allow expert LLMs to effectively complement human expertise rather than replace it. The focus is on creating synergistic relationships where AI systems can augment human capabilities while learning from ongoing human input and oversight.

A New Paradigm for AI Development

This perspective suggests a fundamental shift in how we think about AI development. Rather than trying to create digital oracles that contain all human knowledge, we should focus on developing specialized systems that can effectively collaborate with human experts and each other.

The challenge isn’t just technical – it’s epistemological. We need to deeply consider what constitutes expertise and how it can be effectively transferred to AI systems. This might require entirely new ways of thinking about knowledge representation and learning.

Looking Ahead

Our current moment in AI development is both exciting and humbling. While we’ve made remarkable progress in creating systems that can process and synthesize information in sophisticated ways, we’re also becoming more aware of the vast ocean of human knowledge that remains to be captured and understood.

The real breakthrough will come not from trying to encode all human knowledge into AI systems, but from developing frameworks that allow AI to work alongside human expertise in increasingly sophisticated ways. This approach acknowledges both the power and limitations of current AI technology while pointing the way toward more effective human-AI collaboration.

As we continue to advance in this field, maintaining this balanced perspective will be crucial. It allows us to appreciate the remarkable capabilities of LLMs while remaining clear-eyed about their limitations and the work that still lies ahead.

The goal isn’t to replace human knowledge with artificial intelligence, but to create systems that can effectively amplify and complement human expertise. In doing so, we might discover new ways of understanding and utilizing the vast repository of human wisdom that exists both online and off.